Google AdTech: towards an ad hoc procedure for the design and implementation of remedies

The Commission Decision in Google AdTech exemplifies, better than any other, the shift in the centre of gravity of competition law enforcement towards remedies (see here for a more extensive discussion of this point). It also shows that remedy design is probably the single most important aspect to address in the ongoing reform of Regulation 1/2003.

In the current economic and technological landscape, a finding of infringement no longer marks the end of a case. This milestone is, as they say, just the end of the beginning. The effectiveness of enforcement, in digital and other markets, depends less on establishing an abuse of dominance than it does on ensuring that the infringement is brought to an end by means of a workable and well-crafted remedy.

Towards a structured framework for the design and implementation of remedies

The approach that the Commission is following in Google AdTech is a (welcome) innovation against this background. It has implemented what is, in effect, an ad hoc procedure to define the obligations with which the firm must comply. As this post is being finished, the Commission and Google are in the midst of conversations in the context of this informal procedure (see here for a recent update on the matter).

The aftermath of the decision suggests that the Commission acknowledges that merely ordering a firm to bring the infringement to an effective end no longer does the trick, and neither does a ‘principles-based’ approach that requires an undertaking to abide by an open-textured standard (such as non-discrimination). As I have written elsewhere, the complexity of remedies cannot be wished away by authorities.

Even if only de facto for the time being, Google AdTech suggests that the design and implementation of the remedy will, from now on, follow a structured framework that is independent from the finding of infringement.

Pursuant to the Automec doctrine, this ad hoc procedure first gives the firm the chance to come up with a proposal to bring the infringement to an effective end. Second, it allows the Commission to evaluate whether the undertaking’s proposal indeed addresses the concerns identified in the decision.

Where necessary (and this is the crucial third step), the Commission will specify the obligations with which the firm must comply (just as it does in the context of a commitments procedure). On this point, it has clearly signalled that it may adopt structural measures breaking up Google’s adtech business.

What matters, irrespective of the nature of the remedy, is that the duties imposed upon the firm are spelled out clearly and in sufficient detail to ensure immediate and full compliance.

The need for a structured framework: the experience of Google Shopping

The experience of the past few years shows that a framework for the specification of remedies is indispensable for the effective operation of the competition law regime. Delegating the design and/or implementation of remedies to firms is in nobody’s interest: neither that of the competition authority, nor that of the addressees of the decision, nor that of third parties potentially benefiting from intervention.

More importantly, it is now difficult to dispute that the ‘principles-based’ approach to remedial action has failed. The uncertainty to which it gives rise has major practical consequences, which were dramatically exposed last week (see here).

Google Shopping is a case where controversy around compliance with the remedy never really went away. Whether or not the measures implemented by the firm back in 2017 brought the infringement effectively to an end has always been (and remains) contentious.

It is against this background of perpetual limbo that a German court awarded damages to two of Google’s rivals on the market for price comparison last week. Crucially, the damages award extends to the period following the adoption of the Commission decision, and is therefore based on the assumption that the firm never actually complied with the principles-based remedy imposed.

Even though the Commission never opened non-compliance proceedings against Google, the absence of a decision formally and positively declaring that the infringement had been brought to an effective end paved the way for the award of damages beyond 2017.

This judgment suggests that any remedy implemented following a ‘principles-based’ approach is potentially vulnerable to challenge and, as such, a source of legal and non-legal uncertainty.

Moving forward: codifying the framework

The Commission’s introduction of an ad hoc remedial framework in Google AdTech is therefore the right way forward. Acknowledging the realities and demands of contemporary industries is not just wise but indispensable. It would be desirable if the ad hoc remedies procedure were improved along three dimensions.

First, transparency. The exchanges between the firm and the authority should benefit from similar levels of openness as those observed in the context of commitment procedures. What the firm proposes as per Automec and the authority’s assessment of the proposal should not happen behind closed doors.

Second, third-party involvement. One of the advantages of the commitments procedure within the meaning of Article 9 of Regulation 1/2003 is that obligations are ‘market-tested’ with stakeholders. A structured procedure would give these stakeholders a meaningful chance to provide input about the proposals and to express their concerns, if any.

Third, codification. Ideally, this procedure would be enshrined in a reformed Regulation 1/2003. As a second best, it could at least be codified at the internal level (the 2019 version of the Manual of Procedure was, shall we say, succinct about the issue of remedies and would definitely benefit from a revamp and further detail).

EDITORIAL: Law and technocracy to protect democracy

An editorial of mine has recently come out in the Journal of European Competition Law & Practice (JECLAP). It is available here. The point it makes is simple: the first instinct of illiberal regimes is arguably to disregard the law and ignore expertise (whether it relates to climate change, vaccine safety or any other issue).

I argue, against this background, that, if competition law is to play a role in the protection of democracy and its institutions, it must resist the temptation to abandon law and expertise in the name of expediency or effectiveness. Such a move is not only likely to weaken policy-making and affect its quality, but would inevitably backfire over the long run.

I look forward to your thoughts on it. I explored the same ideas in the keynote lecture delivered in honour of Heike Schweitzer a few months ago (see here).

NEW PAPER: How Android Auto reshapes the law of refusal to deal (and what it means in practice)

I have uploaded to SSRN a new paper (see here) that discusses the judgment of the Court of Justice in Android Auto as well as its substantive and institutional implications. I am pleased that the paper received, yesterday, the AdC Competition Policy Award, granted every year by the Portuguese Competition Authority.

The way in which Android Auto has changed the law of refusal to deal, it seems to me, may not have been fully appreciated. To make this point, it is sufficient to apply the Court’s reasoning in Android Auto to the facts at stake in Bronner. The latter would have been decided differently; evidence of indispensability would not have been required to establish an abuse.

As I explain in the paper, this difference is a function of the way in which Android Auto (re)interprets the indispensability condition. In Bronner and Magill, whether or not the dominant firm had kept the assets ‘for the needs of its own business’ was assessed by reference to the relevant market concerned by the refusal. It was therefore irrelevant that the TV channels in Magill were licensing their copyright to newspapers (but not weekly magazines) or that Mediaprint was printing and distributing another publication in Bronner at the time of the facts.

The analytical approach followed in Android Auto construes the indispensability condition differently. According to the new doctrine, where the dominant firm is dealing with a third party in market A, it can no longer invoke indispensability in relation to a refusal concerning market B. For the same reason, the judgment has significantly reduced the scope of the refusal to deal case law.

This transformation has obvious implications for digital markets. Dominant players in these markets often run systems that are partially open and partially closed. This said, the criteria followed by the Court suggest that the ruling is likely to have an impact in other industries and markets.

In any event, the implications of the judgment go beyond the shrinking of the refusal to deal doctrines. Android Auto allows for intervention that is not confined to a mere duty to deal. A dominant firm may indeed be required not just to share an input or infrastructure with third parties, but to redesign its assets by taking into account the demands of the said third parties. In this sense, the operation of the infrastructure becomes a cooperative venture.

I look forward to your comments on the paper. And thanks again to the AdC!

JOB OPENING: want to join LSE Law School as an Assistant Professor of Competition Law?

LSE Law School is looking for an Assistant Professor of Competition Law, to join the Faculty in September 2026. All information in terms of requirements, conditions and on how to apply can be found here: https://www.jobs.ac.uk/job/DOZ822/assistant-professor-in-law-competition-law.

Needless to say, the competition law team could not be more excited about the prospect of enhancing our teaching and research capabilities in the field.

I can tell from experience that LSE Law School is a non-hierarchical environment that allows you to flourish and find your voice as a scholar. Teaching and administrative duties are light in the first few years (up until promotion to an Associate Professorship). In addition, you will benefit from an annual (and generous) research allowance.

You may be asked to contribute to teaching in foundational subjects, but our competition law curriculum is large and varied. In addition to a dedicated undergraduate module, the LSE Law School now offers an LLM programme with an ample array of competition law options, ranging from State aid and subsidies to the regulation of competition in digital markets.

If you are interested in applying and would like to have an informal chat about the post, do not hesitate to get in touch with me.

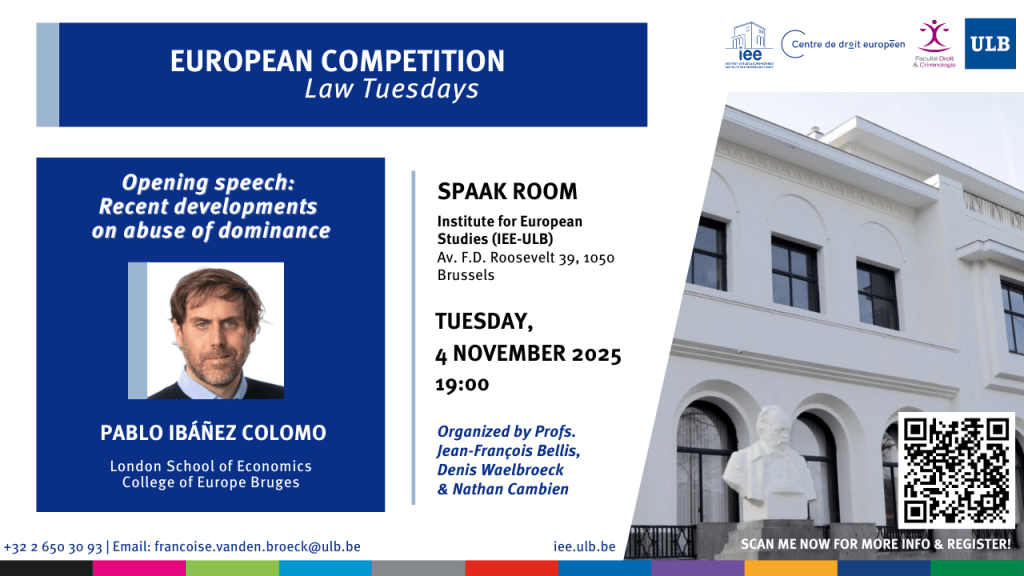

SAVE THE DATE: Recent developments on abuse of dominance (Brussels, 4th November at IEE-ULB)

With every new academic year comes a new edition of the *mardis du droit de la concurrence*. This series has been around pretty much since the dawn of European competition law. It continues to be a unique venue to get a sense of the evolution and direction of travel of our field, more recently under the expert leadership of Denis Waelbroeck and Jean-François Bellis (now joined by the great Nathan Cambien).

The programme for the new academic year is now out (see here). It includes talks that have become classic fixtures, such as Fernando Castillo de la Torre’s overview of the case law in the area of cartels, and provides a complete tour d’horizon of all areas of enforcement, featuring both Commission officials and private practitioners.

Advocate General Laila Medina will be closing this year’s series with a *lundi du droit* (11th May 2026) with a speech on ‘EU competition law and the Court of Justice’.

I am honoured (and grateful) to have been invited to open this year’s series on Tuesday 4th November (7pm Brussels time) with a discussion on the recent developments on abuse of dominance. More information can be found on the flyer above. I really look forward to seeing many of you there!

COMING SOON: The New Law of State Aid and Subsidies (Hart Publishing)

This blog has been neglected to such an extent in the past few months that some of you got in touch to ask whether everything was OK. Fortunately, the only reason I did not get around to sharing thoughts more often is that I was absorbed in the writing of The New Law of State Aid and Subsidies.

As the very cover suggests, it is the second volume of ‘The New’ trilogy, the first instalment of which was The New EU Competition Law. If you were wondering, I hope it will be followed by a monograph on merger control, which is also undergoing fundamental transformations.

I submitted the manuscript to Hart in the early days of summer, and The New Law of State Aid and Subsidies already has a dedicated webpage: https://www.bloomsbury.com/uk/new-law-of-state-aid-and-subsidies-9781509990306/.

The new instalment of the trilogy shares the philosophy of the preceding one: a relatively short, affordable (it will come out in paperback from the beginning) and hopefully accessible volume that is modular by design (in the sense that individual chapters can be read independently of one another).

This is a book that I have been meaning to write for a long time. In more ways than one, it is the fruit of many years teaching State aid and subsidies at the LSE Law School. Providing a conceptual framework to convey the essence of the discipline to students approaching it for the first time is a real challenge. Some chapters (such as the one devoted to selectivity) reflect many hours of class discussions and are no doubt enriched by students’ contributions.

Other chapters seek to trace the various ways in which the law of State aid and subsidies is undergoing fundamental changes. There are, as I explain in the introduction to the book, at least three ways in which this field can be said to be ‘new’:

First, the law is new in a literal sense. The EU Foreign Subsidies Regulation and the UK Subsidy Control Act are novel pieces of legislation that share some features with the EU State aid regime but also depart from it in a number of respects. There is a dedicated chapter addressing each of these developments (and I have been able to incorporate, inter alia, the new draft guidelines on foreign subsidies).

The law is also new in another sense. Following what I call the ‘permacrisis’ of EU State aid policy (where COVID was followed by the invasion of Ukraine, and this against a background of deglobalisation) a ‘new normal’ has emerged (epitomised by the Clean Industrial Deal Framework) that suggests that it may no longer be possible to turn back the clock to the pre-COVID era.

Finally, the tax rulings saga, the symbolic culminating point of which is the Court judgment in Apple (delivered exactly a year ago), ventured into issues that tested the boundaries of Article 107(1) TFEU and, more generally, the ability of the regime to address harmful tax competition. The saga has been so central to the development of EU State aid law that there is a whole chapter devoted to it.

I very much hope we will be able to celebrate the launch of the book, in London, Bruges and beyond, in the new year, and look forward to sharing those moments with many of you. Stay tuned in the meantime!

Announcing the 6th edition of the Rubén Perea Writing Award

Five years ago, our friend and colleague Rubén Perea Molleda passed away, just as he was about to embark on a promising career in competition law following his graduation from the College of Europe. Rubén’s memory remains vivid for everyone who knew him. To honour his memory, we established a competition-law and economics writing prize, which is already in its sixth edition.

As in previous years, the award-winning paper will be published in a special issue of the Journal of European Competition Law & Practice, together with a selection of the best submissions (the JECLAP special issue featuring the winner and finalists of the 5th edition will be out very soon; many articles are already available in Advanced Access). The winner may also receive the award from Executive Vice-President Teresa Ribera.

Who can participate?

In principle, you may only participate if you have not reached the age of 30 by the submission date (i.e., if you were born after 15 October 1995). Undergraduate and postgraduate students, as well as scholars, public officials and practitioners are all invited to participate. This award celebrates promising academic writing from individuals at the beginning of their careers. Our aim is to support and spotlight emerging voices. Thus, if your career path is non-traditional and you believe you align with the spirit of this award, we welcome a brief explanation for consideration here.

What papers can be submitted?

You may submit a single-author unpublished paper on competition law, policy, or economics, which is not under consideration elsewhere. The paper may be specifically prepared for the award, but it may also be a redraft of an undergraduate or postgraduate dissertation. Please observe the following instructions: (1) the paper must not exceed 15,000 words (including footnotes; there is no need to include a bibliography); (2) follow the JECLAP house-style rules, which can be found here; (3) include a short summary in the form of 3-4 key points (single-sentence bullet points).

For your information, the Jury will assess your paper based on the following three main criteria: (1) clarity of writing; (2) depth of research; and (3) innovativeness. This year, the Jury will consist of Petra Nemeckova, Alfonso Lamadrid, Gianni De Stefano, Philip Marsden, Eugenia Brandimarte, Nicolas Fafchamps, David Perez de Lamo, and Lena Hornkohl.

How to submit?

Please submit the paper via this link: https://mc.manuscriptcentral.com/jeclap. IMPORTANT: As you go through the submission process, make sure that in Step 5, you answer YES to the question “Is this for a special issue?”, and indicate that your submission relates to the Rubén Perea Award.

What is the DEADLINE?

Papers have to be submitted by 23.59 (Brussels time) on 15 October 2025.

JECLAP Special Issue: Competition Law and Gender Perspectives

JECLAP is particularly proud to announce the publication of a Special Issue devoted to Competition Law and Gender Perspectives. The initiative owes a great deal to the indefatigable Lena Hornkohl, who has worked together with European Commission officials Johannes Holzwarth and Senta Marenz (both of whom are involved in DG Comp’s Equality Network).

The issue can be accessed here. It comes with an editorial by Lena, Johannes and Senta. As you can see from the list below, the pieces are invariably fascinating and cover a great deal of ground (with the added plus that many of them are available in Open Access). We very much hope this issue will contribute to raising the salience of gender issues in competition law.

Gender and Competition Law: An Exploration of Feminist Perspectives, by Giorgio Monti (Tilburg University)

Building a More Inclusive and Gender-proof Competition Law in Europe, by Baskaran Balasingham, Italo Leone and Linda Senden (Utrecht University)

Gender Inclusive Priority Setting in Competition Law Enforcement, by Kati Cseres (University of Amsterdam) and Or Brook (University of Leeds)

Influence of Gender Perspectives on the Work of Competition Authorities, by Lisa Leitner, Corinna Potocnik-Manzouri, and Nora Schindler (Bundeswettbewerbsbehörde Austria)

Diversity and Cartel Governance, by Johannes Blaschczok and Shazana Rohr (LMU Munich)

At first Blush—Taking a Competition Lens to Healthcare Pink Taxes, by Neha Georgie (Compass Lexecon Singapore)

Bridging the Innovation Gender Gap: Considerations under EU Merger Control, by Ece Ban (University of Oxford) and Carolina Banda (MPI for Innovation and Competition Munich)

Monopsony Power, Competition Law, and Women’s Informal Labour Markets in Latin America, by Germán Oscar Johannsen and Jeniffer Rodriguez (MPI for Innovation and Competition Munich)

Feminist Legal Education and Teaching Competition Law, by Mary Catherine Lucey (University College Dublin)

Can restorative remedies be imposed in EU antitrust law? Should they?

Remedies are now at the forefront of legal and policy discussions. As discussed here, this reality reflects a shift in the centre of gravity of enforcement (in the EU and beyond). The administrative priorities of authorities and the economic features of digital markets demand the administration of obligations that are regulatory in nature (such as the functional or structural unbundling of activities, access obligations and the determination of wholesale and retail prices).

A bare-bones cease-and-desist order does the job in oligopolistic markets, but is often unable to deliver in industries (such as digital) that feature monopolies or quasi-monopolies across one or several levels of the value chain.

Authorities and commentators are catching up with this emerging landscape. For a long time, remedies were, at best, an afterthought (and not just in the literature). There is therefore every reason to welcome the structured conversation on the topic that is organically taking place.

The recent Report on the ex post evaluation of EU antitrust remedies, produced for the Commission, exemplifies the new trend. In addition, scholarship addressing remedy design and implementation is growing and constantly taking fascinating new directions.

Is there room for restorative remedies in EU antitrust law?

Interestingly, the rise of remedies as a central theme in the EU antitrust arena has revealed that some basic issues are yet to be addressed systematically. Surprising as it may sound, something as fundamental as the very purpose of enforcement is not fully clear. For instance, many of the most recent contributions to the literature assume as self-evident that the point of remedies is (or can be) restorative in nature.

What authors mean by restorative is not always clear (there is a ‘thick’ and a ‘thin’ version of the concept, as I explain in the article cited above). That said, the concept means, at a minimum, that remedial action should recreate the conditions of competition that existed prior to the infringement. Even in its more modest incarnation, therefore, restorative intervention would go beyond merely bringing the infringement to an end. It would require turning back the clock to where things were in terms of structure and competition dynamics.

If one reads the EU case law carefully, however, there is precious little support for the idea that restorative remedies can be imposed in EU antitrust law. One could take the argument further: the judgments that are typically relied upon to substantiate the claim suggest, if anything, that restorative intervention is, as the law stands, outside the reach of courts and authorities (at least when they enforce Articles 101 and 102 TFEU).

This conclusion is particularly apparent from AKZO, which, paradoxically, is frequently mentioned in support of the proposition that remedial action can be restorative. As part of the remedy package in the case, the dominant firm was required to refrain from engaging in selective price-cutting.

The purpose of this measure was not to restore the conditions of competition that existed prior to the abuse. The Court (see para 155) is even explicit about the fact that the remedy did not seek to re-allocate to ECS the customers it had lost to AKZO (which would have made it restorative in nature). The more modest ambition of the obligation was simply to bring the infringement to an effective end and prevent the abuse from taking place again.

Another ruling commonly mentioned in support of restorative intervention in EU antitrust law is Ufex, where the Court of Justice held that where the ‘anti-competitive effects continue after the practices which caused them have ceased’, the Commission ‘remains competent’ to act ‘with a view to eliminating or neutralising’ them.

The best way to make sense of this passage is to take a look at the precedent to which it refers, namely Continental Can. This venerable classic of EU competition law concerned the abusive acquisition of a rival. In the specific circumstances of the case, the infringement could only be brought to an effective end by mandating a divestiture. The intervention was a mere adaptation to the nature of the infringement (a one-off) and to its effects (which were not a one-off and thus lasted until intervention took place).

We are yet to see a judgment of the Court of Justice addressing the question of whether remedial intervention can go beyond merely bringing the infringement to an end. A good opportunity would have been Lithuanian Railways, as one of the alternatives identified by the Commission in its decision was the restoration of the railway track. However, the remedy was not challenged by the dominant firm in its appeal (and, when contested before the General Court, the dominant firm did not claim that such a remedy exceeded the scope of the authority’s powers under Regulation 1/2003).

Should remedies be restorative in nature?

The fact that, as the law stands, there is little support for restorative intervention does not mean that such measures are necessarily beyond the reach of antitrust courts and authorities. Similarly, it does not rule out the possibility that the Court will accept them in the future. It simply means that one cannot take for granted that restorative remedies are a feature of the system (or that a court or a competition authority is not acting decisively when it chooses not to impose such measures).

It also means, more to the point, that it is necessary to have a conversation about their desirability. The arguments in favour of restorative remedies are well-known and definitely have force to them. In markets that change rapidly and that naturally tend towards concentration, merely bringing the infringement to an end may achieve little in some instances. If the role of antitrust law is to be taken seriously, the argument goes, restorative intervention is a necessity, at least in some instances.

Arguments against this form of intervention are no less powerful. The single most persuasive one has to do, as usual, with the sheer complexity that comes with the design and implementation of restorative measures. We have witnessed in the past few years that getting remedies right in the digital arena is a complex task, even for the most sophisticated and well-resourced of authorities.

It is not clear that adding more demands to the already stretched antitrust institutions will be beneficial for the system. In this sense, I have always been of the view that creating the expectation that competition law can achieve more than it realistically is able to deliver is particularly harmful to its credibility (and its status in the EU legal order at large).

There is another factor to consider in this debate, which is equality of treatment. Would restorative remedies be imposed in some cases but not others? If so, on what basis? Could the matter be left to the discretion of the court or authority? One should not forget, in this sense, that the principle of equal treatment in the context of remedial action has featured prominently in the case law (most notably in the old ice cream cases, Langnese-Iglo and Schöller).

What matters, as I say, is to get the conversation started and discuss meaningfully the pros and cons of restorative intervention. Your comments, as ever, would be most welcome.

CALL FOR ABSTRACTS | JECLAP Special Issue on the DMA

The Digital Markets Act has been up and running for a while. We have witnessed, over the past year, the designation (and non-designation) of operators as gatekeepers, as well as the first judgments interpreting the Regulation. With this week’s non-compliance decisions against Apple and Meta, the regime may be entering a fascinating new era.

At JECLAP, we feel that the time is right to take stock of the evolution of the Digital Markets Act in the form of a Special Issue. It has been a lifetime since we last published on the regime (the Regulation itself had not even been adopted at the time).

JECLAP welcomes the submission of abstracts for consideration on the substantive, institutional and procedural aspects of the DMA, including (but not limited to) the following:

- The criteria for the designation of firms as gatekeepers.

- Emerging interpretation of the various substantive obligations (Articles 5 to 7).

- Judicial review of administrative action in the DMA.

- Procedural aspects.

- Interaction with competition law.

- Private enforcement of the DMA.

- Comparative perspectives.

If you have an idea for a paper, please email Gianni De Stefano (Gianni.De-Stefano@ec.europa.eu) or myself (P.Ibanez-Colomo@lse.ac.uk) by Friday 9 May with your proposal.

This proposal should take the form of an abstract of not more than 250 words in which you outline:

- The issue you would like to address;

- The angle you intend to take;

- The contribution your piece is expected to make; and

- Whether you have any actual or potential conflicts of interest (more information on what and when to disclose can be found here).

If your abstract is accepted (we will let you know as soon as possible), we expect the final article (ideally of around 7,000-10,000 words) to be submitted by mid-July at the latest.

As always, we will select abstracts to maximise diversity and balance in the Special Issue. We are, as ever, keen to give a voice to new authors and innovative approaches. If there was any doubt: we welcome legal and economic perspectives (and certainly papers that combine both).

In the meantime, do not hesitate to get in touch with any questions or suggestions!